Tuesday 25 April

13:30-15:00

ST1: State of the Art in Methods and Representations for Fabrication-Aware Design

15:30-17:00

ST2: From 3D Models to 3D Prints: an Overview of the Processing Pipeline

Thursday 27 April

9:00-10:30

ST3: A Comparative Review of Tone-Mapping Algorithms for High Dynamic Range Video

13:30-15:00

ST4: Intrinsic Decompositions for Image Editing

15:30-17:00

ST5: Perception-driven Accelerated Rendering

Tuesday 25 April

ST1: State of the Art in Methods and Representations for Fabrication-Aware Design

Amit Haim Bermano, Thomas Funkhouser, Szymon Rusinkiewicz

Session details: Tuesday 25 April, 13:30 - 15:00 Room: Rhône 2

Computational manufacturing technologies such as 3D printing hold the potential for creating objects with previously undreamed-of combinations of functionality and physical properties. Human designers, however, typically cannot exploit the full geometric (and often material) complexity of which these devices are capable. This STAR examines recent systems in the computer graphics community in which designers specify higher-level goals ranging from structural integrity and deformation to appearance and aesthetics, with the final detailed shape and manufacturing instructions emerging as the result of computation. It summarizes frameworks for interaction, simulation, and optimization, as well as documenting the range of general objectives and domain-specific goals that have been considered. An important unifying thread in this analysis is that different underlying geometric and physical representations are necessary for different tasks: we document over a dozen classes of representations that have been used for fabrication-aware design in the literature. We analyze how these classes possess obvious advantages for some needs, but have also been used in creative manners to facilitate unexpected problem solutions.

ST2: From 3D Models to 3D Prints: an Overview of the Processing Pipeline

Marco Livesu, Stefano Ellero, Jonas Martínez, Sylvain Lefebvre, Marco Attene

Session details: Tuesday 25 April, 15:30 - 17:00 Room: Rhône 2

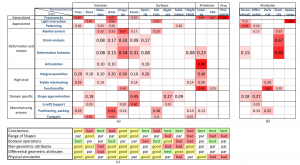

Due to the wide diffusion of 3D printing technologies, geometric algorithms for Additive Manufacturing are being invented at an impressive speed. Each single step, in particular along the Process Planning pipeline, can now count on dozens of methods that prepare the 3D model for fabrication, while analysing and optimizing geometry and machine instructions for various objectives. This report provides a classification of this huge state of the art, and elicits the relation between each single algorithm and a list of desirable objectives during Process Planning. The objectives themselves are listed and discussed, along with possible needs for tradeoffs. Additive Manufacturing technologies are broadly categorized to explicitly relate classes of devices and supported features. Finally, this report offers an analysis of the state of the art while discussing open and challenging problems from both an academic and an industrial perspective.

Thursday 27 April

ST3: A Comparative Review of Tone-Mapping Algorithms for High Dynamic Range Video

Gabriel Eilertsen, Rafal Mantiuk, Jonas Unger

Session details: Thursday 27 April, 9:00 - 10:30 Room: Rhône 1

Tone-mapping constitutes a key component within the field of high dynamic range (HDR) imaging. Its importance is manifested in the vast number of tone-mapping methods that can be found in the literature, which are the result of active development in the area for more than two decades. Although these can accommodate most requirements for display of HDR images, new challenges arose with the advent of HDR video, calling for additional considerations in the design of tone-mapping operators (TMOs). Today, a range of TMOs exists that support video material. We are now reaching a point where most camera-captured HDR videos can be prepared in high quality, without visible artifacts, for the constraints of a standard display device. In this report, we set out to summarize and categorize the research in tone-mapping as of today, distilling the most important trends and characteristics of the tone reproduction pipeline. While this gives a broad overview of the area, we then focus specifically on tone-mapping of HDR video and the problems this medium entails. First, we formulate the major challenges a video TMO needs to address. Then, we provide a description and categorization of each of the existing video TMOs. Finally, by constructing a set of quantitative measures, we evaluate the performance of a number of the operators, in order to indicate which can be expected to render the fewest artifacts. This serves as a comprehensive reference, categorization and comparative assessment of the state of the art in tone-mapping for HDR video.

ST4: Intrinsic Decompositions for Image Editing

Nicolas Bonneel, Balazs Kovacs, Kavita Bala, Sylvain Paris

Session details: Thursday 27 April, 13:30 - 15:00 Room: Rhône 1

Intrinsic images are a mid-level representation of an image that decomposes the image into reflectance and illumination layers. The reflectance layer captures the color/texture of surfaces in the scene, while the illumination layer captures shading effects caused by interactions between scene illumination and surface geometry. Intrinsic images have a long history in computer vision and, more recently, in computer graphics, and have been shown to be a useful representation for tasks ranging from scene understanding and reconstruction to image editing. In this report, we review and evaluate past work on this problem. Specifically, we discuss each work in terms of the priors it imposes on the intrinsic image problem. We introduce a new synthetic ground-truth dataset that we use to evaluate the validity of these priors and the performance of the methods. Finally, we evaluate the performance of the different methods in the context of image-editing applications.

ST5: Perception-driven Accelerated Rendering

Martin Weier, Michael Stengel, Thorsten Roth, Piotr Didyk, Elmar Eisemann, Martin Eisemann, Steve Grogorick, André Hinkenjann, Ernst Kruijff, Marcus Magnor, Karol Myszkowski, Philipp Slusallek

Session details: Thursday 27 April, 15:30 - 17:00 Room: Rhône 1

Advances in computer graphics enable us to create digital images of astonishing complexity and realism. However, processing resources are still a limiting factor. Hence, many costly but desirable aspects of realism are often not accounted for, including global illumination, accurate depth of field and motion blur, and spectral effects, especially in real-time rendering. On the other hand, there is a strong trend towards a larger number of pixels per display due to larger displays, higher pixel densities or larger fields of view. Also, more bits per pixel (high dynamic range, wider color gamut/fidelity), increasing refresh rates (better motion depiction), and an increasing number of displayed views per pixel (stereo, multi-view, all the way to holographic or lightfield displays) are observable trends in current display technology. This development causes significant technical challenges, due to aspects such as limited compute power and bandwidth, which are as yet unsolved. Fortunately, the human visual system has certain limitations which suggest that providing the highest possible visual quality is not always necessary. In this report, we present the key research and models that exploit the limitations of perception to tackle visual quality and workload alike. Moreover, we present the open problems and promising future research targeting the question of how we can minimize the effort to compute and display only the necessary pixels while still offering a full visual experience to the user.

STAR Chairs

Matthias Zwicker, University of Bern, Switzerland and University of Maryland, College Park, USA

Victor Ostromoukhov, University of Lyon, France