Motion Capture

This work aims to capture shape, motion and appearance from single-camera or multi-camera video streams in unconstrained environments.

Objectives

This part of our research addresses the problem of acquiring geometric models, appearance models and motion of humans from single or multiple images with the following constraints:

- provide algorithms suited to real-time applications
- use low-cost peripherals
- work in the presence of multiple capture targets

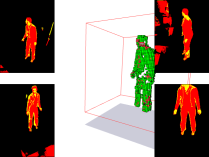

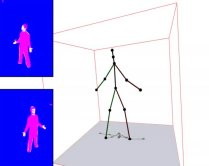

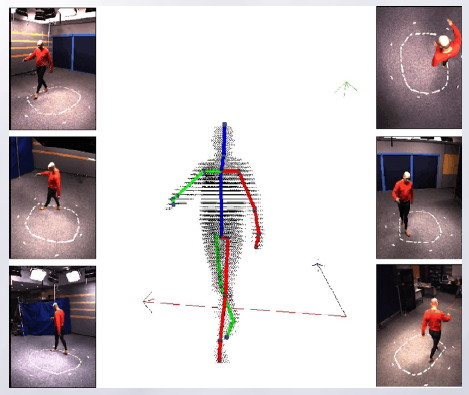

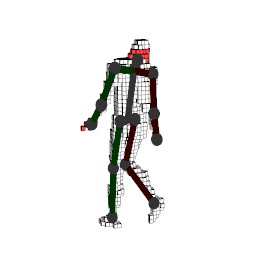

Full body shape and motion acquisition

We are interested in the automatic acquisition of the 3D movement of people. To be usable by the general public, the capture must work without specialized equipment (markers or special clothing) and in real time. The real-time constraint is necessary for interactive applications.

Members involved: Erwan Guillou, Saïda Bouakaz

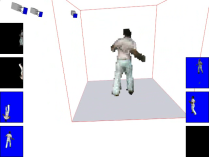

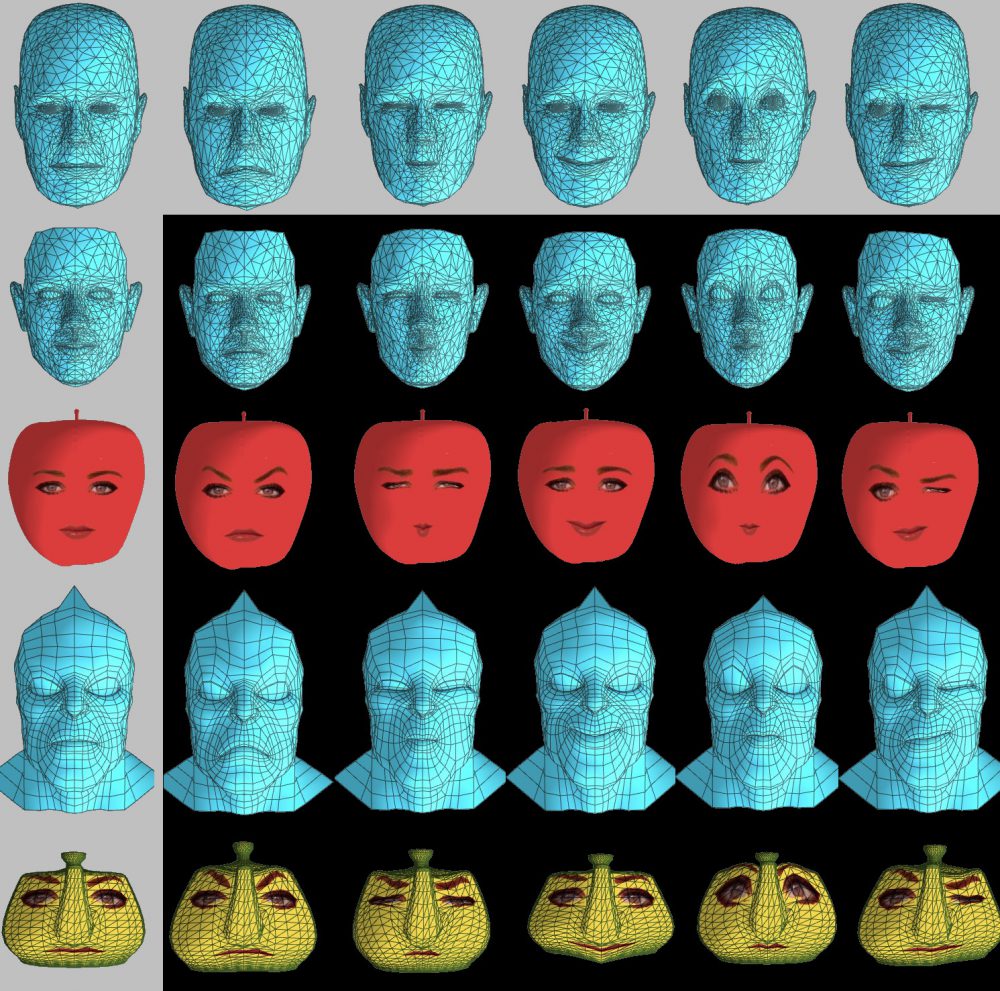

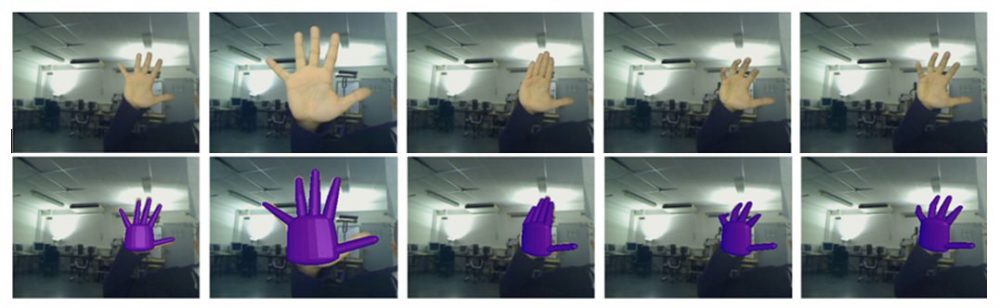

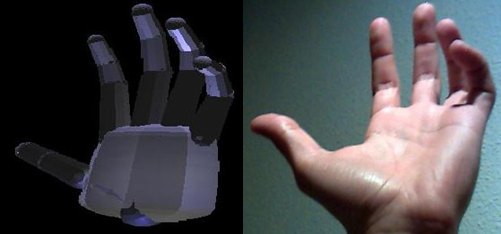

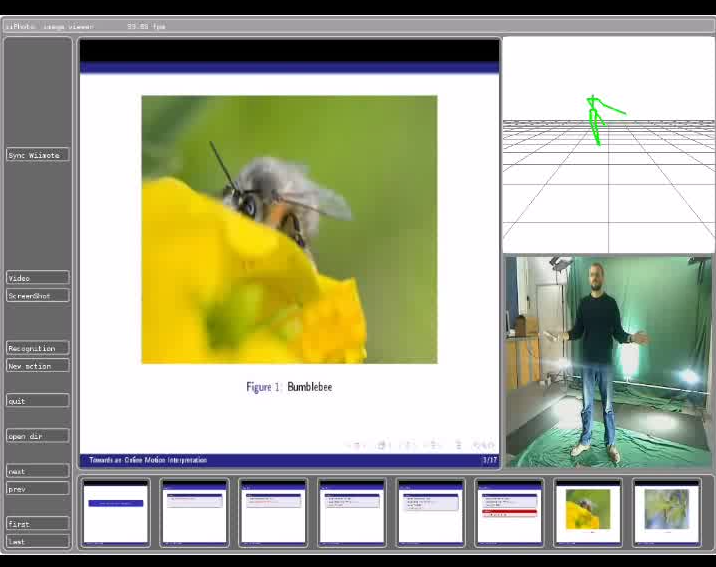

Hand and face motion acquisition

This work aims to capture the fine articulations of a person, in particular those of the hand, and fine details of the face (wrinkles). For the face, in addition to large-scale motion capture from a single camera, our work proposed using shading information to reconstruct skin wrinkles. For the hand, initial work enabled us to develop an acquisition system that captures simple hand movements. We then worked on the problem of finger self-occlusion, a problem that exists even in capture systems that use markers.

Members involved: Alexandre Meyer, Saïda Bouakaz

Analysis: from recognition to synthesis

Our goal is to analyze, adapt and reproduce motion, taking into account the influence of the emotional state and physical environment of the captured person. To achieve this, we work on the analysis of the physical and semantic aspects of movement and on the synthesis of computer animations: procedural animation, constrained animation, etc.

Objectives

This topic covers various applications, including:

- interpretation of movement in an everyday environment
- real-time recognition of motions and actions
- editing and adapting computer animation to its surrounding environment

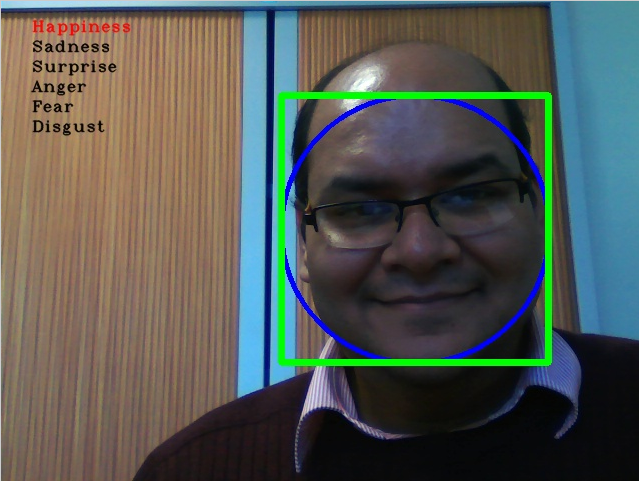

Motion and emotion recognition

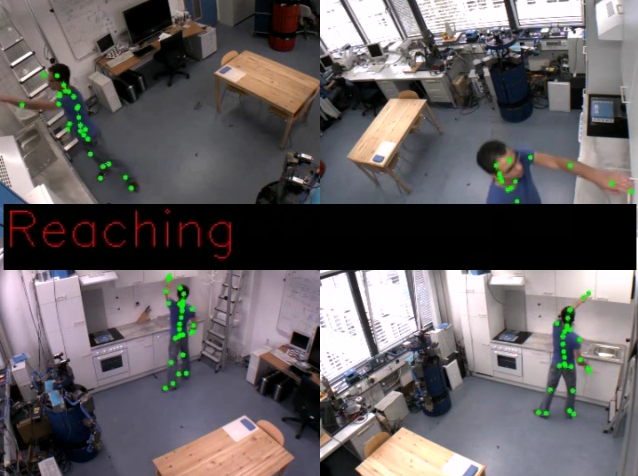

We aimed to propose motion recognition methods based on the exemplar paradigm, where training uses only a few instances of 3D motions. This choice addresses the problem of huge training databases and offers a way to recognize ongoing actions. We propose two complementary solutions that meet real-time, precision and extensibility constraints:

- the first is based on the analysis of joint trajectories
- the second deals with invariant sequences in actions
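To make the exemplar idea concrete, here is a minimal sketch of recognition from joint trajectories with only one stored example per action. It is an illustration under stated assumptions, not the method used in this work: dynamic time warping (DTW) is swapped in as a common distance between trajectories, and the names `dtw_distance`, `classify` and `EXEMPLARS`, as well as the toy data, are hypothetical.

```python
import math


def frame_distance(pose_a, pose_b):
    """Euclidean distance between two poses (flat lists of joint coordinates)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(pose_a, pose_b)))


def dtw_distance(seq_a, seq_b):
    """Dynamic time warping cost between two pose sequences.

    DTW is an assumption made for this sketch; the source does not say
    which trajectory distance is used.
    """
    n, m = len(seq_a), len(seq_b)
    inf = float("inf")
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = frame_distance(seq_a[i - 1], seq_b[j - 1])
            # Extend the cheapest of the three admissible warping steps.
            cost[i][j] = d + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return cost[n][m]


def classify(query, exemplars):
    """Return the label of the exemplar closest to the query under DTW.

    exemplars: list of (label, sequence) pairs, one or a few per action.
    """
    return min(exemplars, key=lambda item: dtw_distance(query, item[1]))[0]


# Hypothetical toy exemplars: the height of a single joint over time.
EXEMPLARS = [
    ("raise_arm", [[0.0], [0.5], [1.0]]),
    ("lower_arm", [[1.0], [0.5], [0.0]]),
]

if __name__ == "__main__":
    # A query with a different duration than the stored exemplars.
    print(classify([[0.0], [0.1], [0.6], [1.0]], EXEMPLARS))
```

Adding a new action in this sketch only requires appending one labelled sequence to `EXEMPLARS`, which is the kind of extensibility an exemplar-based approach is meant to provide.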

For emotion recognition, our work takes inspiration from human vision, which we studied through several experiments. These studies gave us rules for analyzing only the discriminant parts of the face. Combined with efficient descriptors that merge color and shape information, this makes it possible to recognize emotions in real time, even on low-resolution images.

Members involved: Erwan Guillou, Alexandre Meyer, Saïda Bouakaz

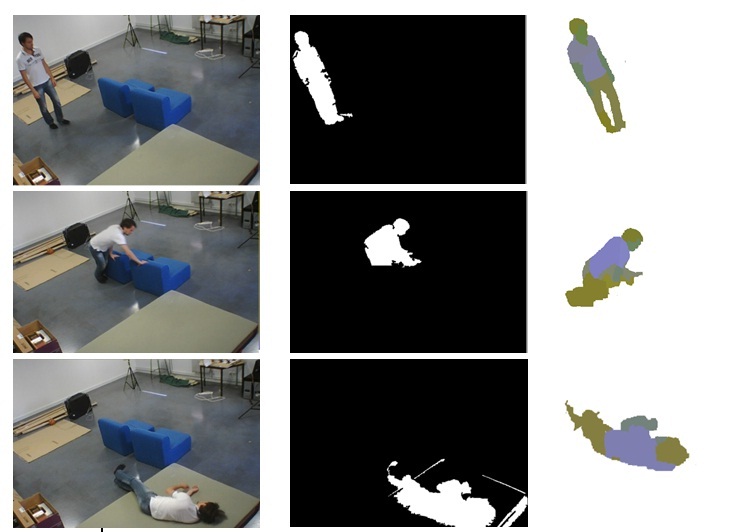

Situation analysis

The objective of this work is to provide tools that identify an abnormal situation for a person using motion detection and analysis of their actions. Among other things, this may make it possible to detect risky situations (fall, distress, …) and send alerts to decision-making processes. The elements of study are as follows:

- detection and adaptive extraction of the person in their usual environment from a video stream
- identification of body parts
- analysis of posture and motion tracking
- situation analysis using predefined scenarios
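As an illustration of what a predefined scenario could look like, the sketch below encodes a simple "fall" rule over a per-frame height signal (for example the head or torso height produced by the posture analysis step). Everything in it is an assumption made for the example: the function name `detect_fall`, the thresholds, and the rule itself are hypothetical and are not taken from this work.

```python
def detect_fall(heights, fps, standing_height,
                low_ratio=0.4, upright_ratio=0.8,
                max_drop_s=1.0, min_low_s=2.0):
    """Return the index of the first frame matching the fall scenario, or None.

    Hypothetical scenario: the tracked height goes from upright to low within
    max_drop_s seconds, then stays low for at least min_low_s seconds.
    heights: per-frame height of the tracked body part, in the same unit
    as standing_height.
    """
    low = low_ratio * standing_height
    upright = upright_ratio * standing_height
    drop_frames = int(max_drop_s * fps)
    low_frames = int(min_low_s * fps)

    for i, h in enumerate(heights):
        if h >= low:
            continue
        # The person must have been upright shortly before (fast drop).
        before = heights[max(0, i - drop_frames):i]
        if not any(v >= upright for v in before):
            continue
        # The person must then stay low long enough to rule out a stumble.
        after = heights[i:i + low_frames]
        if len(after) == low_frames and all(v < low for v in after):
            return i
    return None


if __name__ == "__main__":
    # Hypothetical signal at 10 fps: 2 s standing, then 3 s on the ground.
    signal = [1.7] * 20 + [0.3] * 30
    print(detect_fall(signal, fps=10, standing_height=1.7))
```

A slow transition, such as sitting or lying down over several seconds, does not match this rule because no upright frame falls inside the short window before the low posture. In a real system the returned frame index would be what triggers the alert to the decision-making process.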

Members involved: Elodie Desserée, Saïda Bouakaz, Erwan Guillou